The full 38-page White Paper includes:

The headlines say 80% of U.S. work can be automated. Yet only 17% of U.S. businesses have successfully deployed AI in any function.

This gap exists because governance—NOT technical capability—is the limiting factor.

We applied four operational constraints to 18,898 work tasks across 148 million U.S. workers.

The result: a precise map of where AI delegation is safe, where it's risky, and where it must remain human.

Our analysis started with the O*NET task database, sponsored by the U.S. Department of Labor, which describes 848 jobs through 18,898 tasks. We extended it with governance constraint scoring.

We used a multi-model AI council (Claude, GPT-4, Gemini, Llama) to control for single-model bias, achieving inter-rater reliability that meets or exceeds academic standards.
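The text does not say which reliability statistic was used to score the council. As one possible illustration, Fleiss' kappa is a common measure of agreement when more than two raters assign categorical labels. The sketch below assumes each model assigns exactly one label per task. The function name `fleiss_kappa`, the label names, and the sample ratings are hypothetical and are not data from the white paper.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of tasks, each a list with one label per rater.

    Assumes every task was labeled by the same number of raters (>= 2).
    """
    n_tasks = len(ratings)
    if n_tasks == 0:
        raise ValueError("need at least one rated task")
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(row) != n_raters for row in ratings):
        raise ValueError("every task needs the same number (>= 2) of ratings")

    categories = sorted({label for row in ratings for label in row})
    counts = [Counter(row) for row in ratings]

    # Observed agreement: share of rater pairs that agree, averaged over tasks.
    per_task = [
        (sum(c[cat] ** 2 for cat in categories) - n_raters)
        / (n_raters * (n_raters - 1))
        for c in counts
    ]
    p_observed = sum(per_task) / n_tasks

    # Chance agreement: based on how often each category is used overall.
    total = n_tasks * n_raters
    p_chance = sum((sum(c[cat] for c in counts) / total) ** 2 for cat in categories)

    if p_chance == 1.0:
        # Only one category was ever used, so agreement is trivially perfect.
        return 1.0
    return (p_observed - p_chance) / (1.0 - p_chance)


# Hypothetical labels from four raters (e.g. four models) on three tasks.
sample = [
    ["delegate", "delegate", "delegate", "delegate"],
    ["human", "human", "human", "delegate"],
    ["human", "human", "human", "human"],
]
print(f"Fleiss' kappa on sample ratings: {fleiss_kappa(sample):.3f}")
```

A value near 1 indicates that raters agree far more often than chance would predict; a value near 0 indicates agreement no better than chance.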

What makes our approach unique:

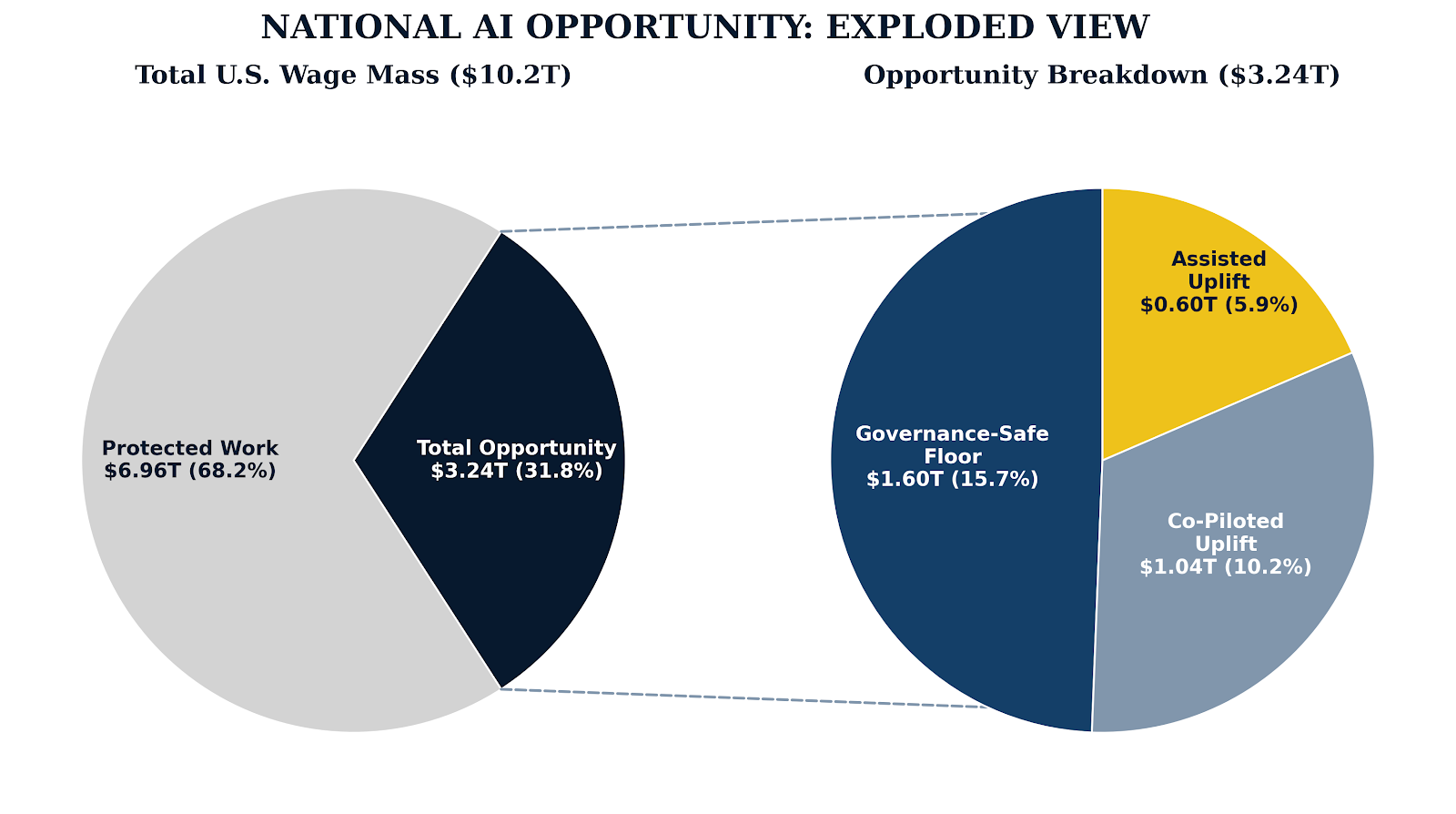

Only 15.7% of Work Is Currently Exposed to AI Under Governance Constraints, with Another 16.1% of Opportunity

We estimate the governance-safe floor for AI automation in the U.S. to be $1.6 trillion annually (15.7% of U.S. base wages). This includes $1.02 trillion in Core Work delegation (10.0% of wages) and $0.58 trillion in coordination efficiency (5.7% of wages).
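As a quick consistency check, the two components can be summed and compared with the quoted totals. The sketch below uses only the figures stated above. The implied wage base is a derived value obtained by dividing dollars by share, not a number quoted in the text, and the variable names are illustrative.

```python
# Figures quoted in the text: trillions of USD and share of U.S. base wages.
core_usd, core_share = 1.02, 0.100    # Core Work delegation
coord_usd, coord_share = 0.58, 0.057  # Coordination efficiency

# The governance-safe floor is the sum of the two components.
floor_usd = core_usd + coord_usd
floor_share = core_share + coord_share

# Derived, not quoted: the wage base implied by dividing dollars by share.
implied_wage_base = floor_usd / floor_share

print(f"Floor: ${floor_usd:.2f}T, {floor_share:.1%} of base wages")
print(f"Implied U.S. base wages: about ${implied_wage_base:.1f}T")
```

The components add up to the stated $1.6 trillion and 15.7%, and each component implies roughly the same wage base, so the quoted figures are internally consistent.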

We further estimate another $1.64 trillion in opportunity (16.1% of wages) for liberated human capital investment, including the following...

With the advent of ATMs and mobile banking, some predicted that the bank teller was a job of the past. It was not. The bank teller who previously processed transactions became the advisor who solves problems. Similarly, the medical coder who looked up codes became the analyst who coaches physicians. The support agent who answered tickets became the relationship manager who prevents them.

This is role distillation: high-delegation tasks migrate to AI, while human work concentrates on judgment, accountability, and relationships.

But distillation doesn't happen automatically. Without deliberate planning, freed capacity gets absorbed by administrative drift. Leaders must identify where to reinvest before they deploy.

Failure Mode 1: Confusing Coordination with Core Work

Knowledge workers spend 57-60% of their time on coordination work such as emails, meetings, and status updates. This is the low-hanging fruit. But most managers go straight for core work, where governance constraints bind hardest.

Failure Mode 2: Training for Fear Instead of Fluency

Most corporate AI training focuses on what not to do, which results in paralyzed non-users. Those who do embrace AI do so without fluency training, producing reams of "slop" that clog downstream processes.

Failure Mode 3: Chasing Moonshots Before Building Confidence

Core work integrations and high-risk use cases require organizational capabilities that don't exist yet—verification processes that haven't been designed, governance systems that haven't been tested, and cultural confidence that hasn't been earned.